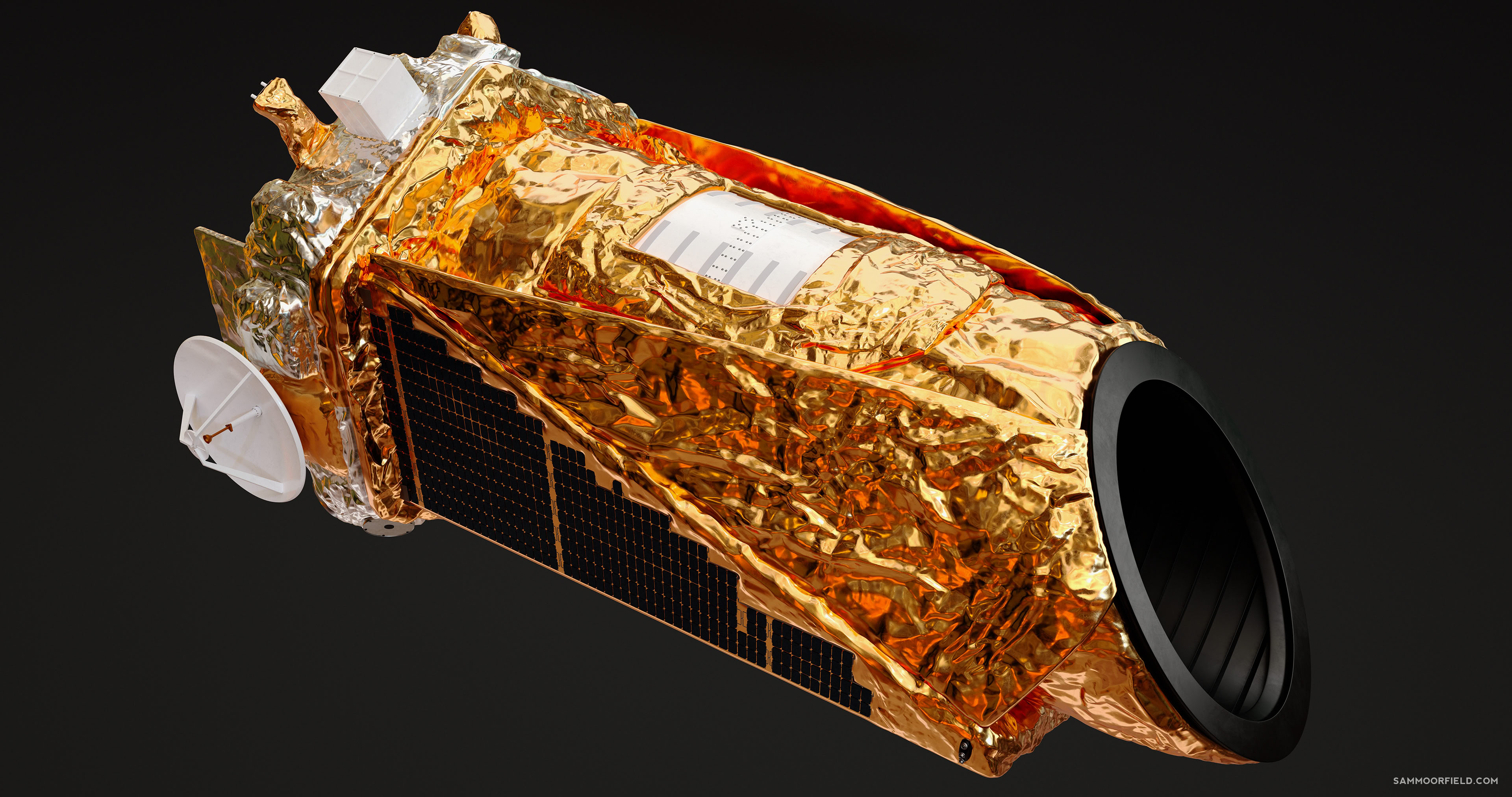

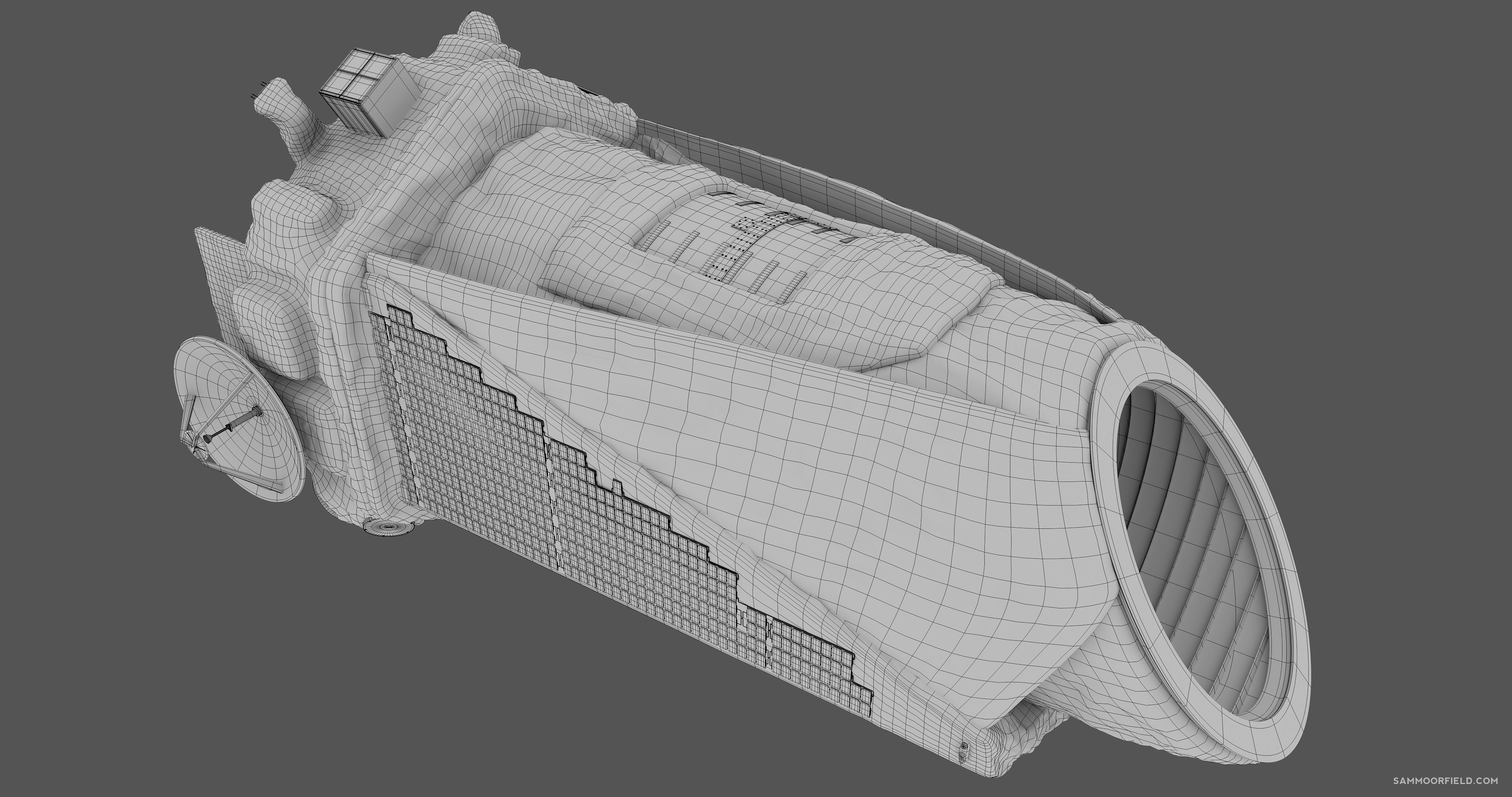

Hi, I'm Sam Moorfield, a digital artist based in Australia. I've spent the past 19 years creating CGI and VFX for film and television.

[email protected]

CGI Showreel Notes

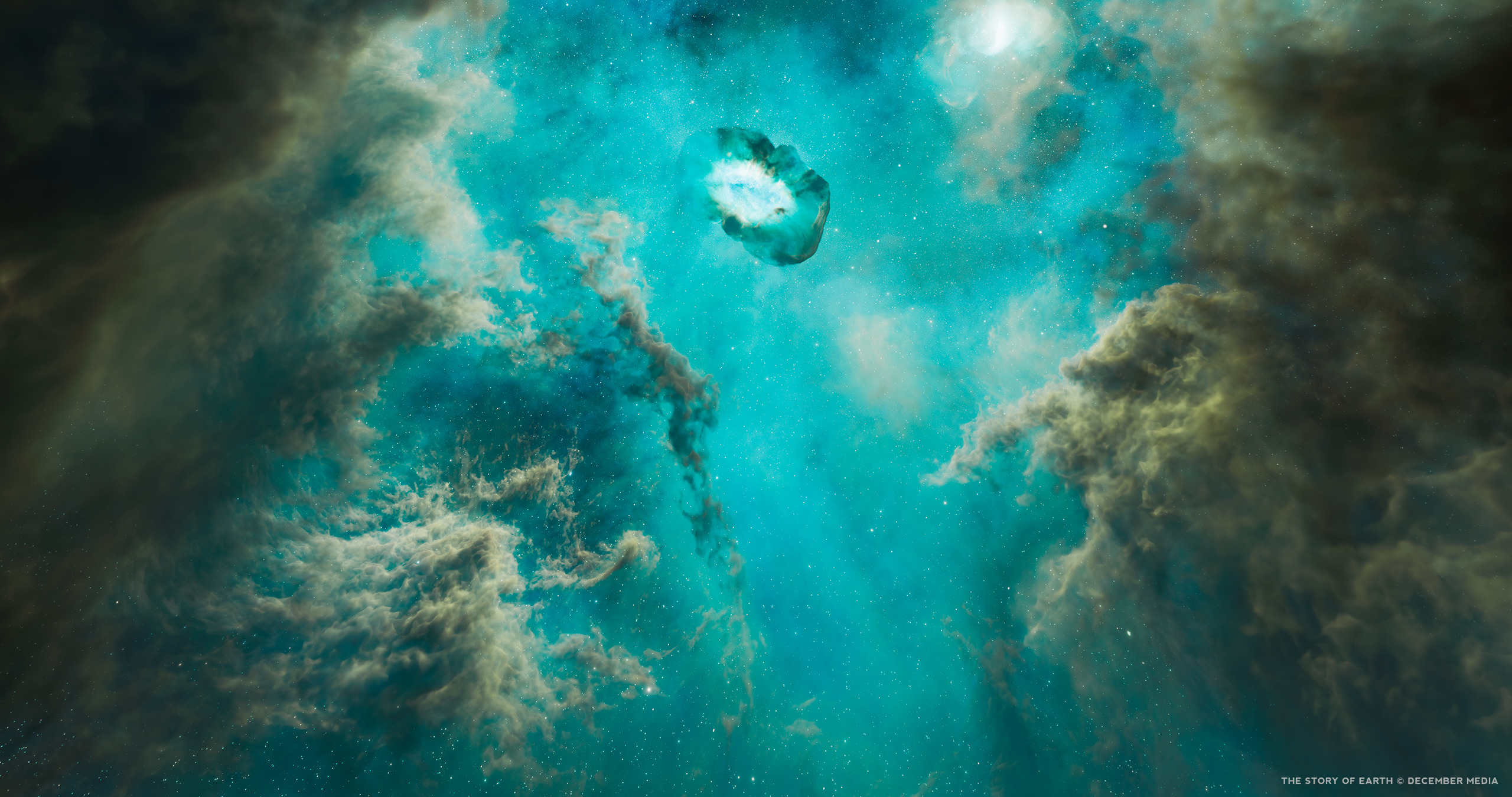

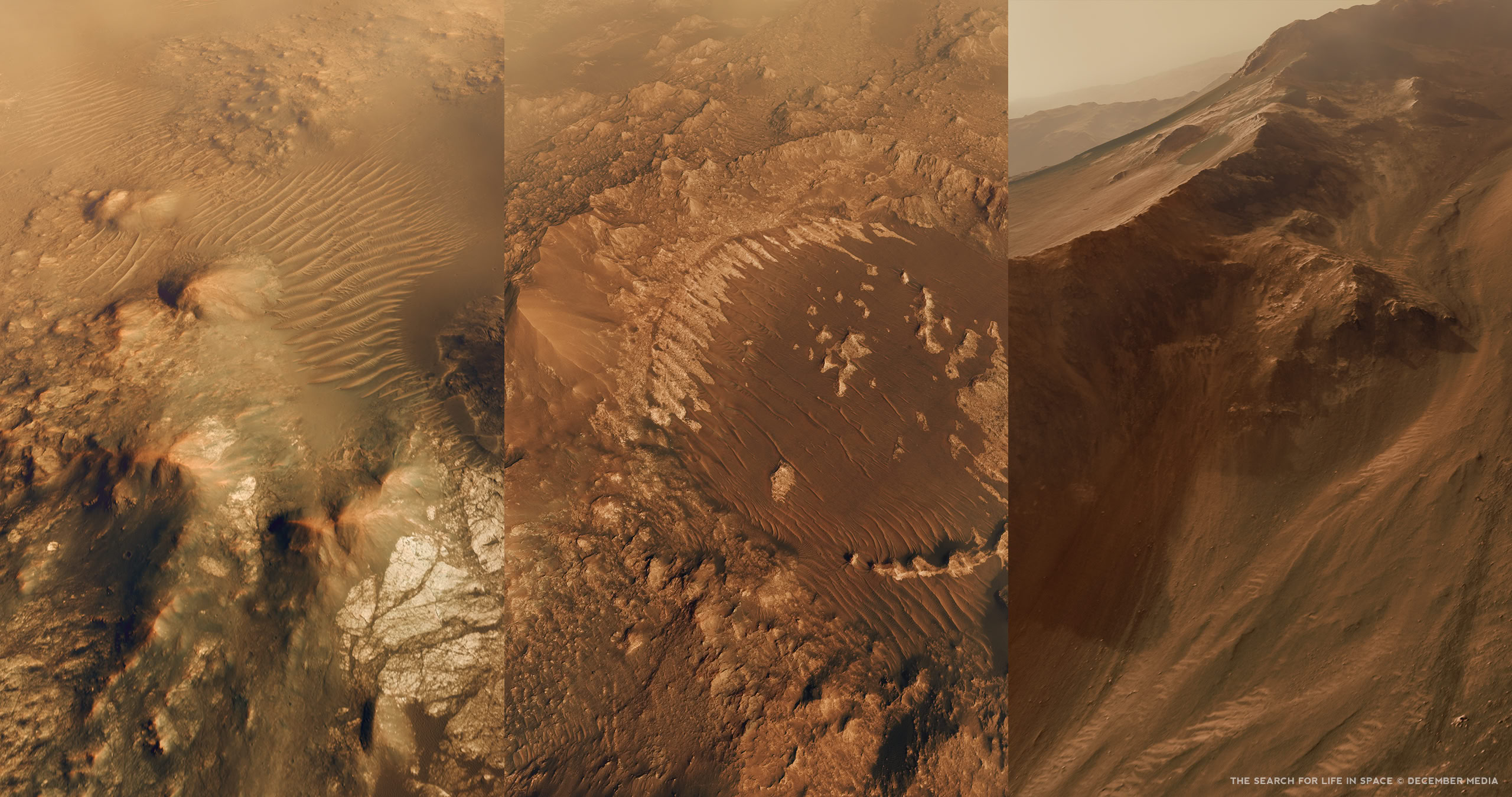

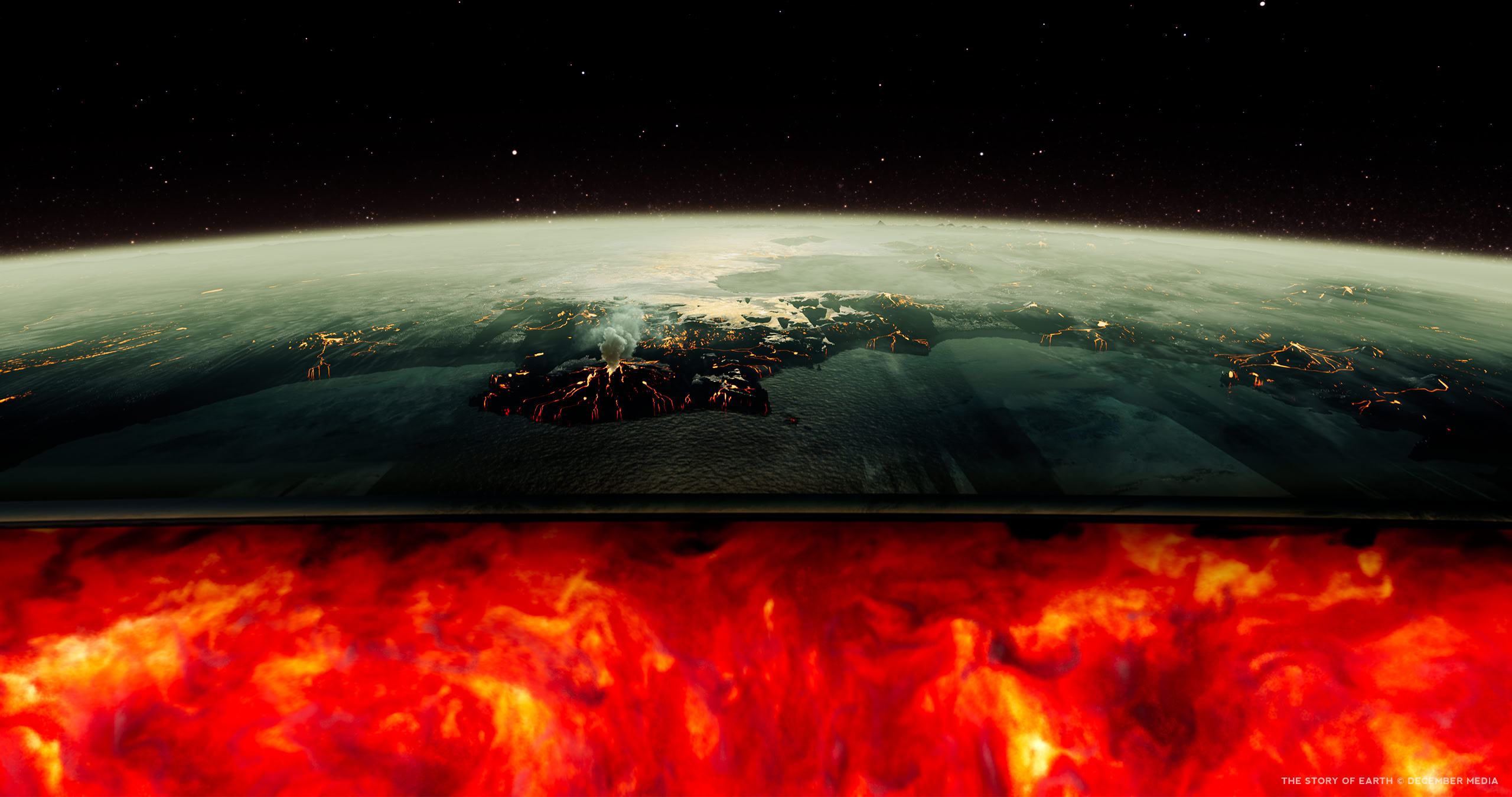

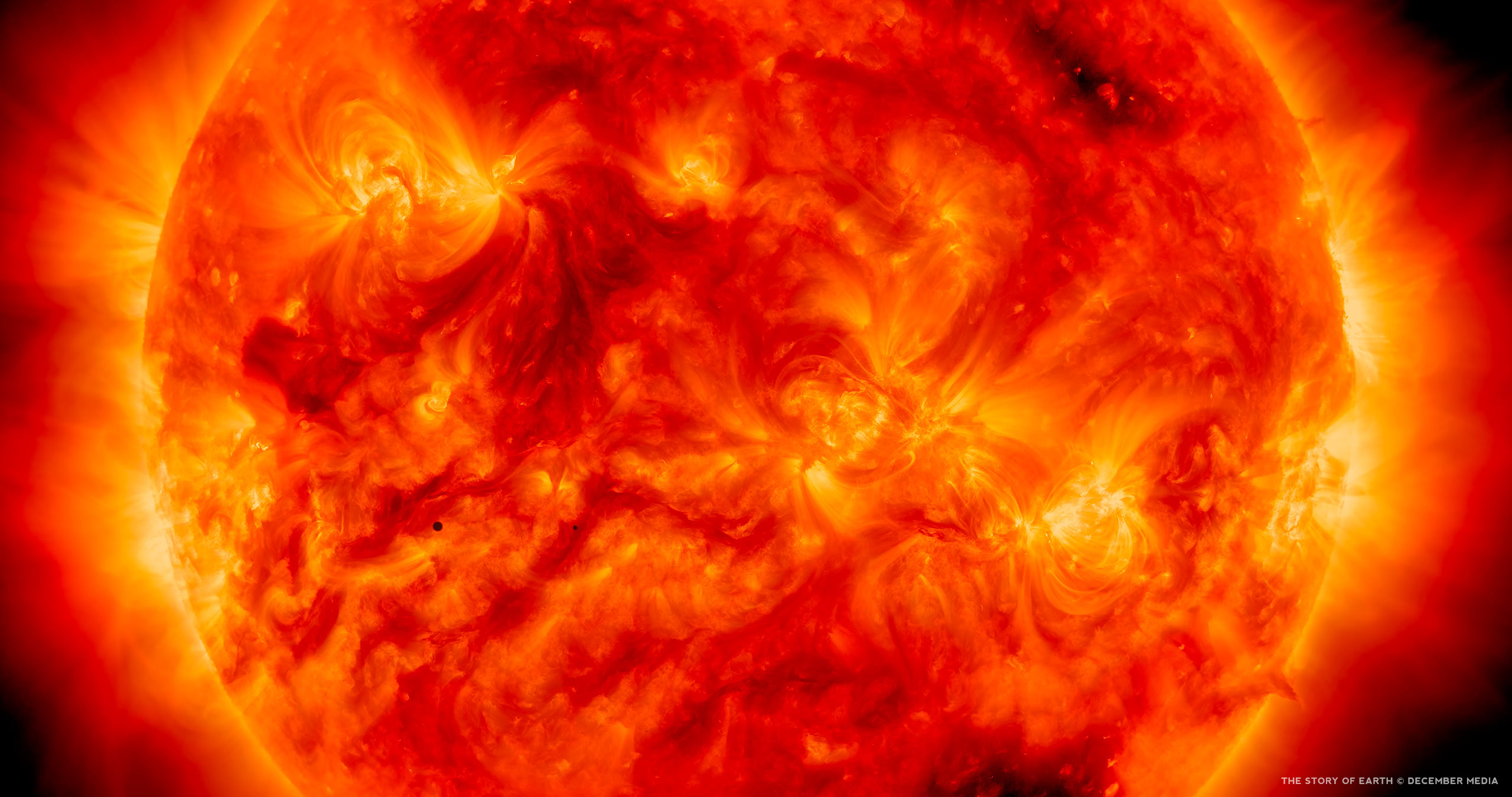

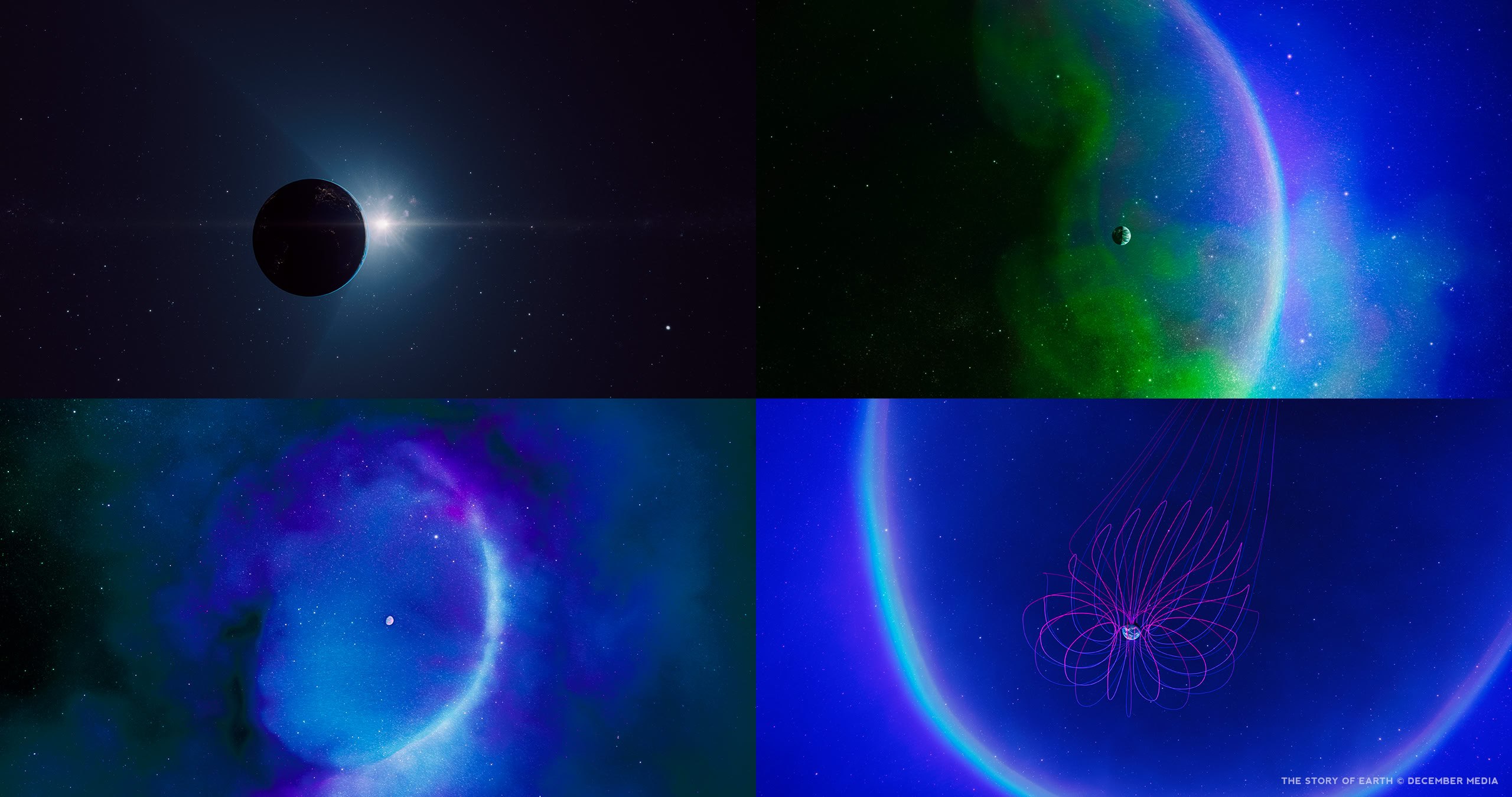

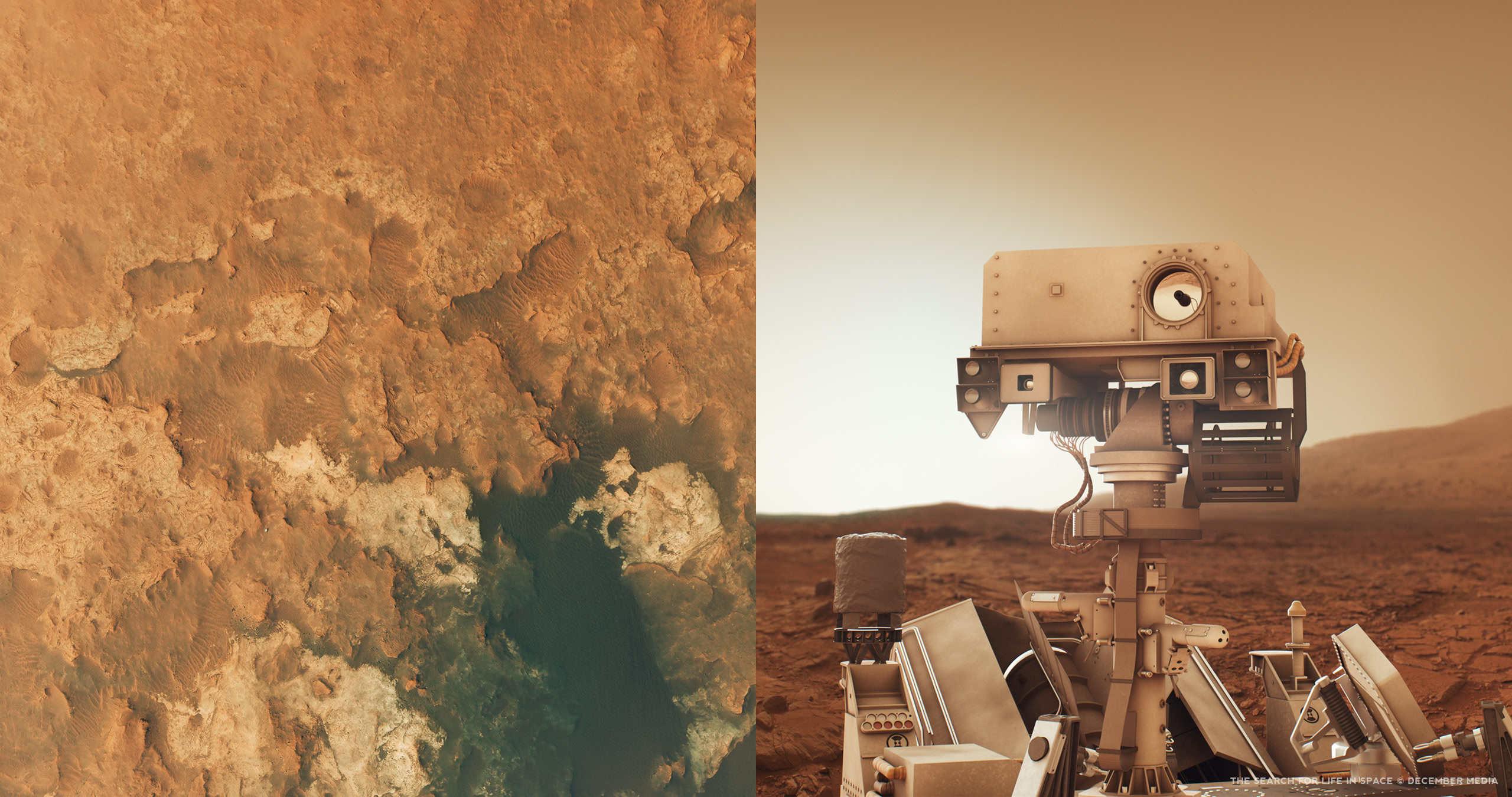

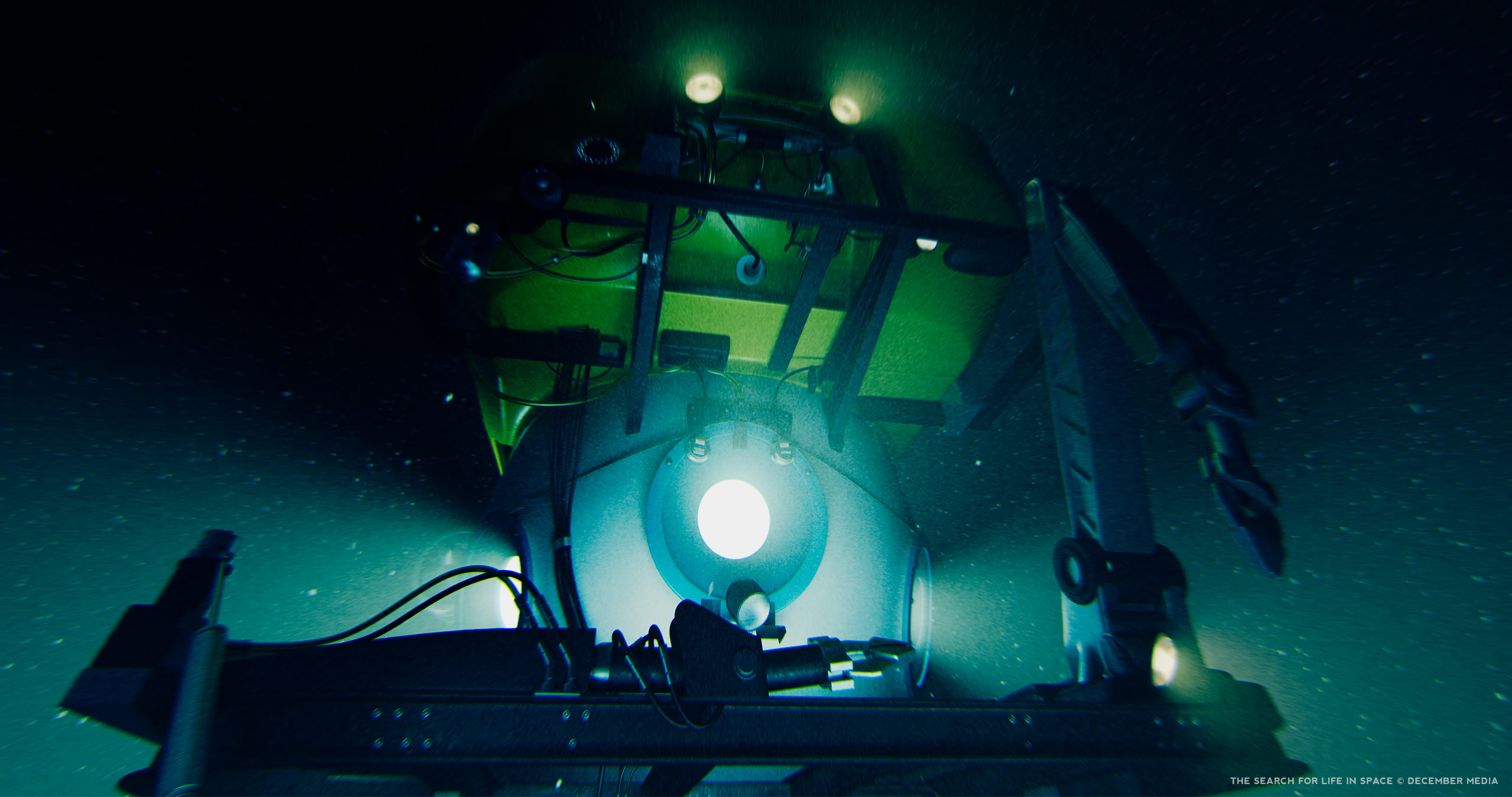

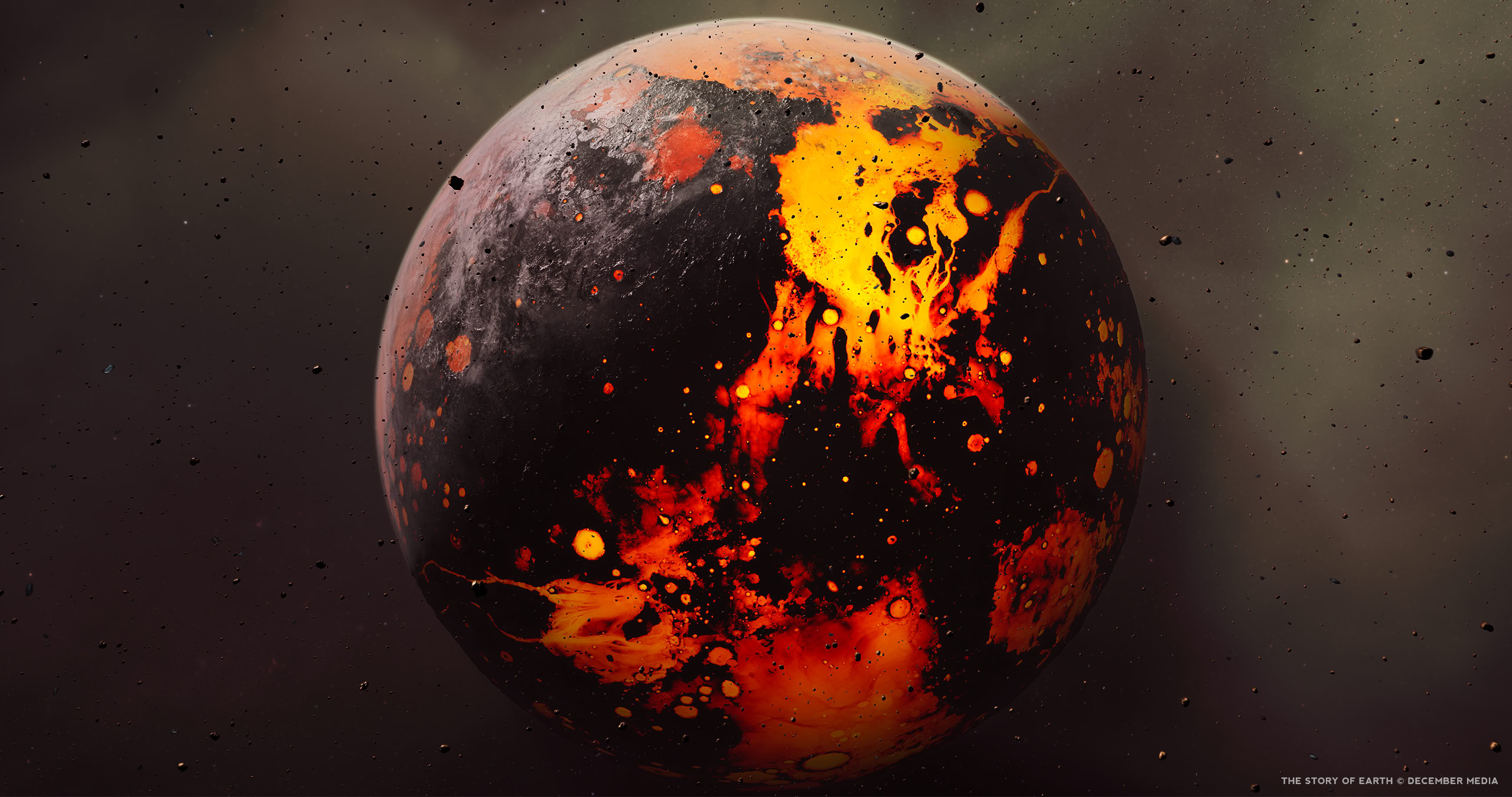

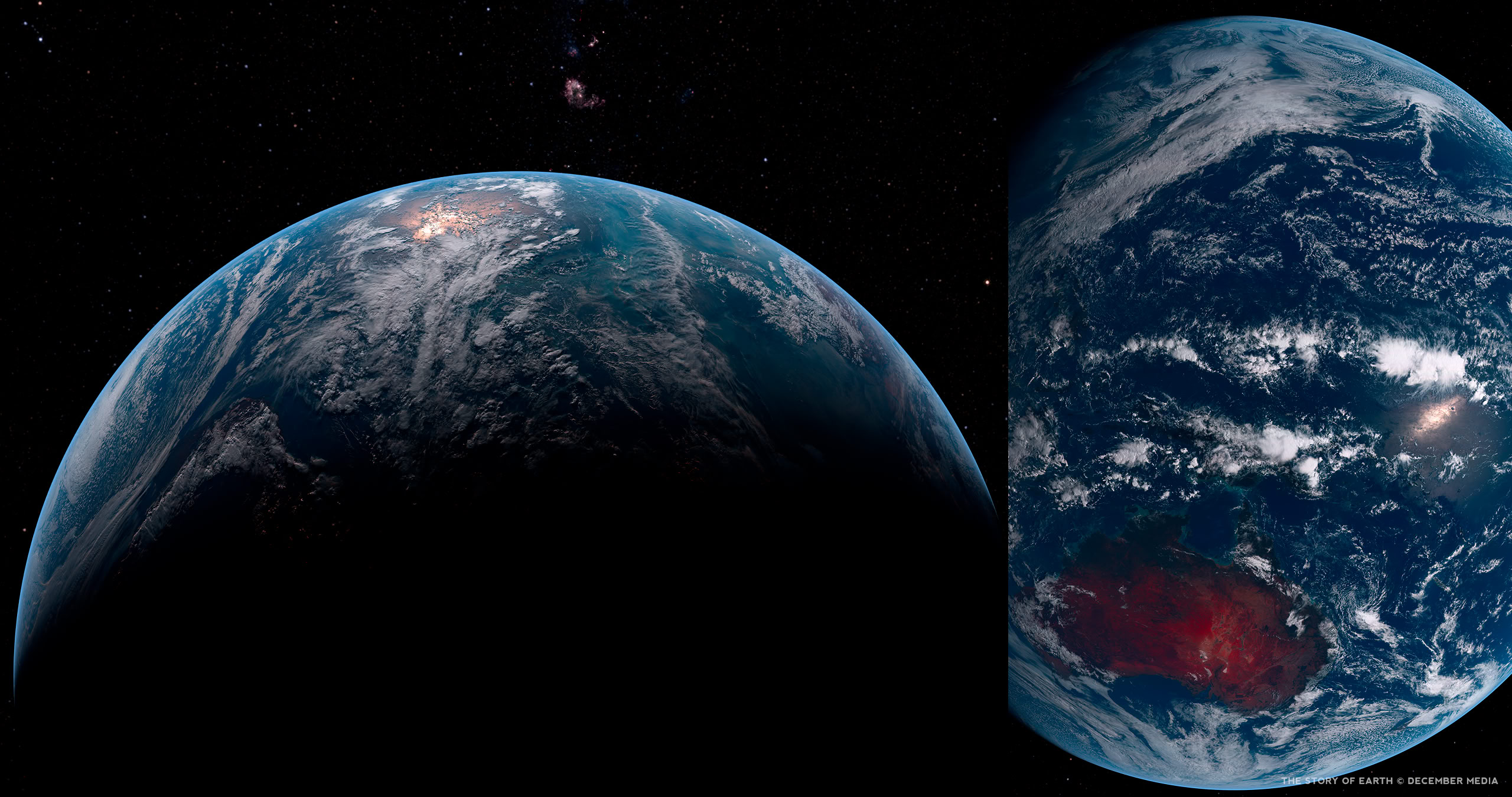

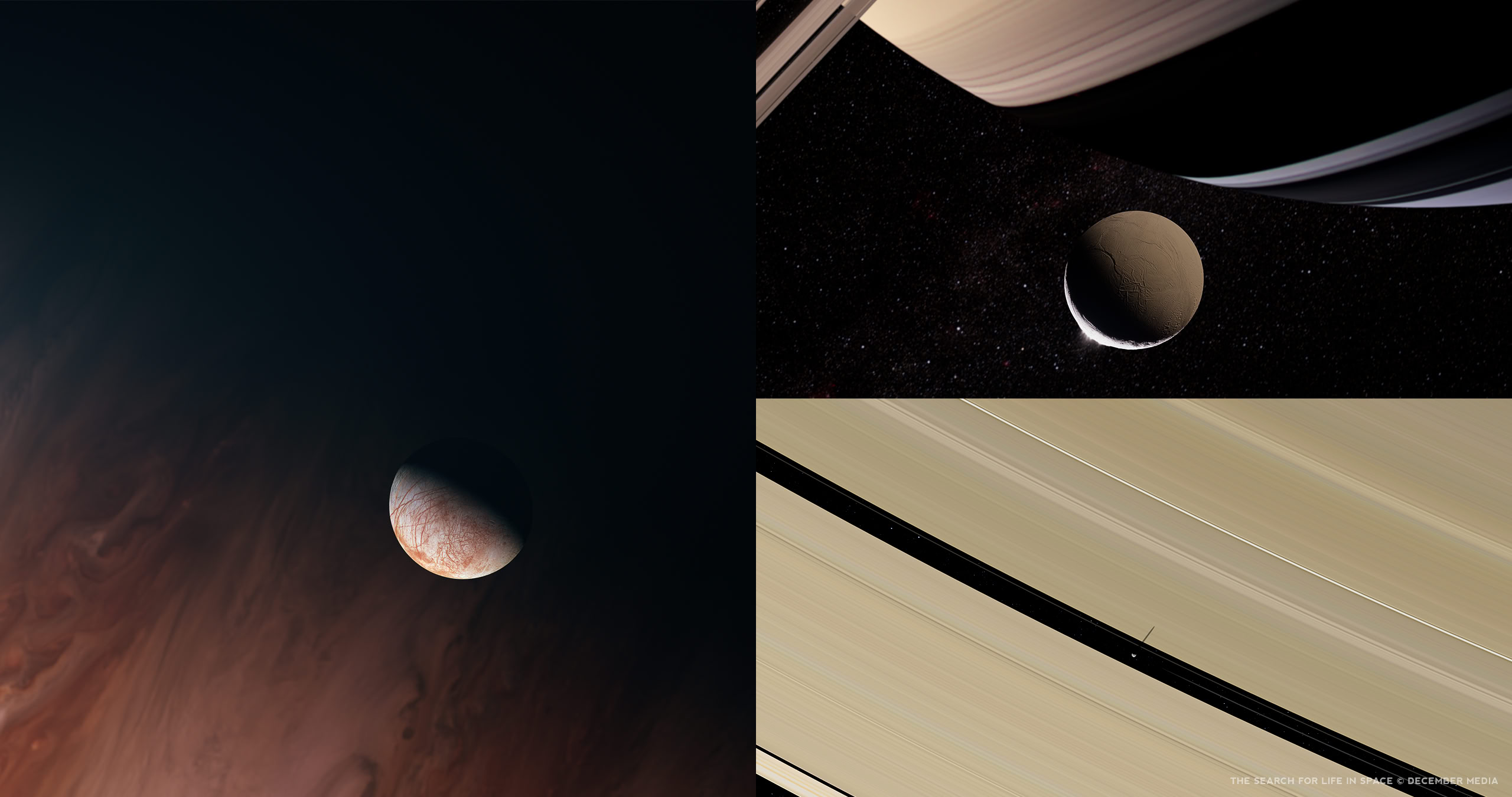

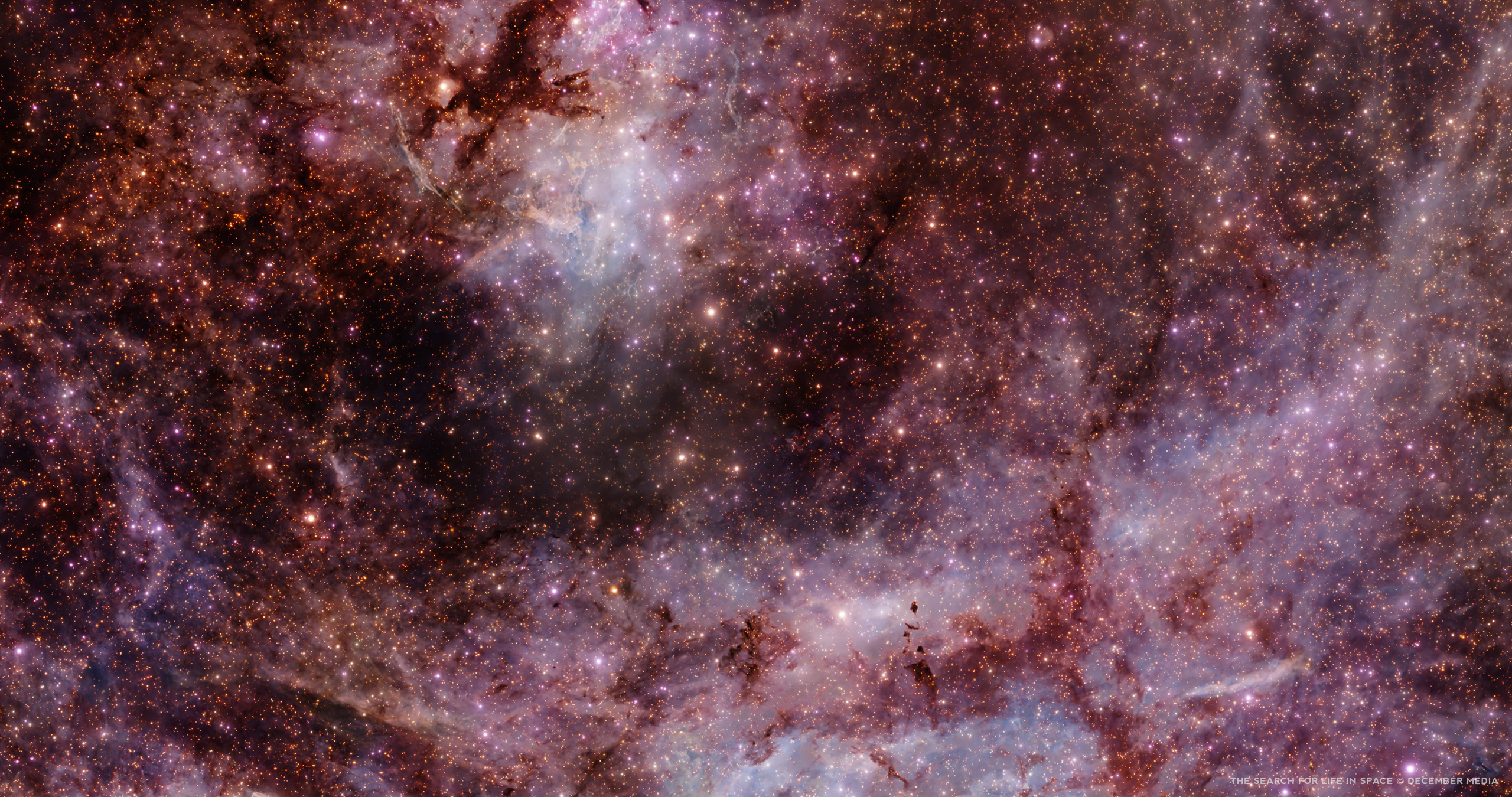

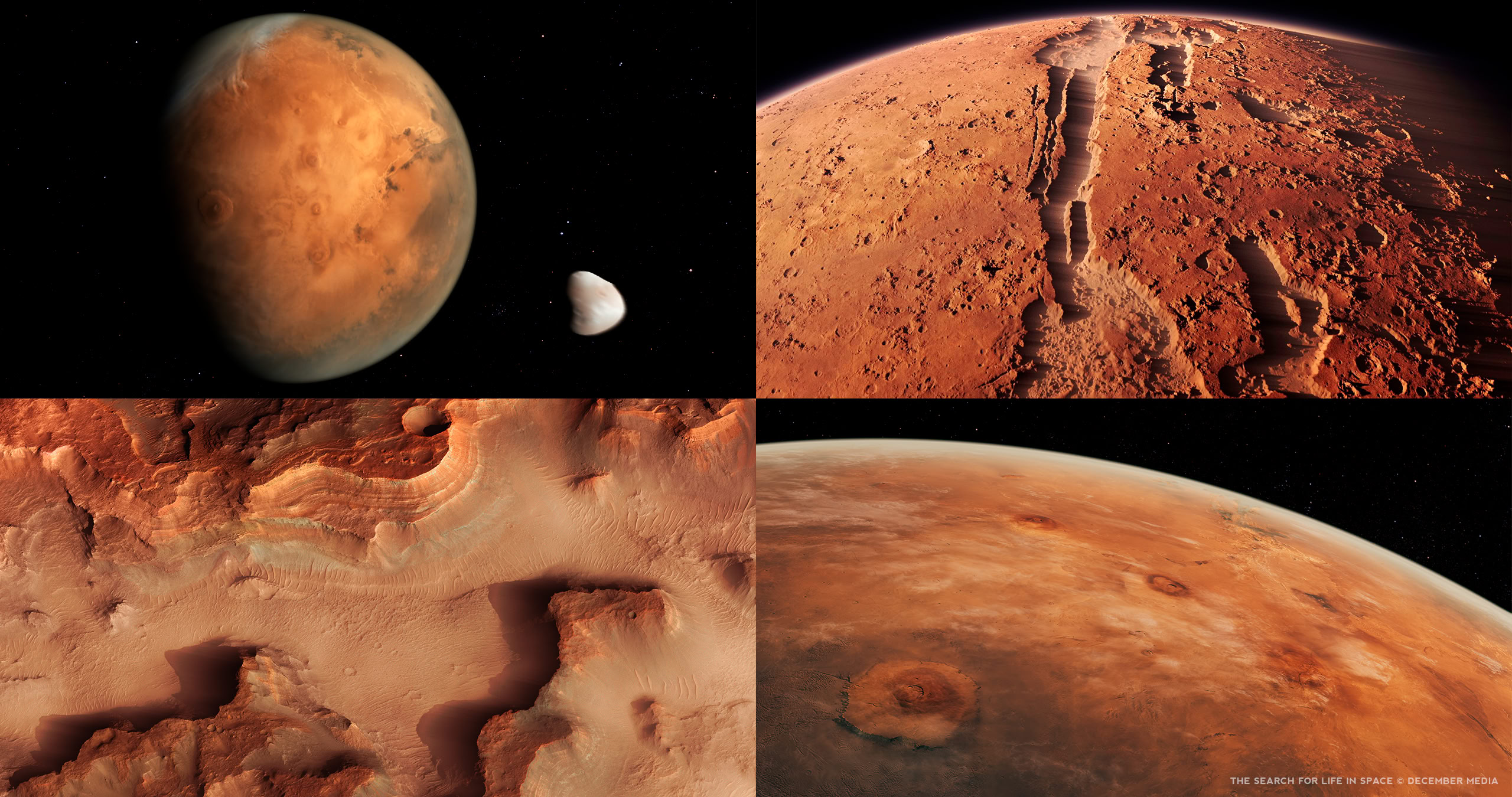

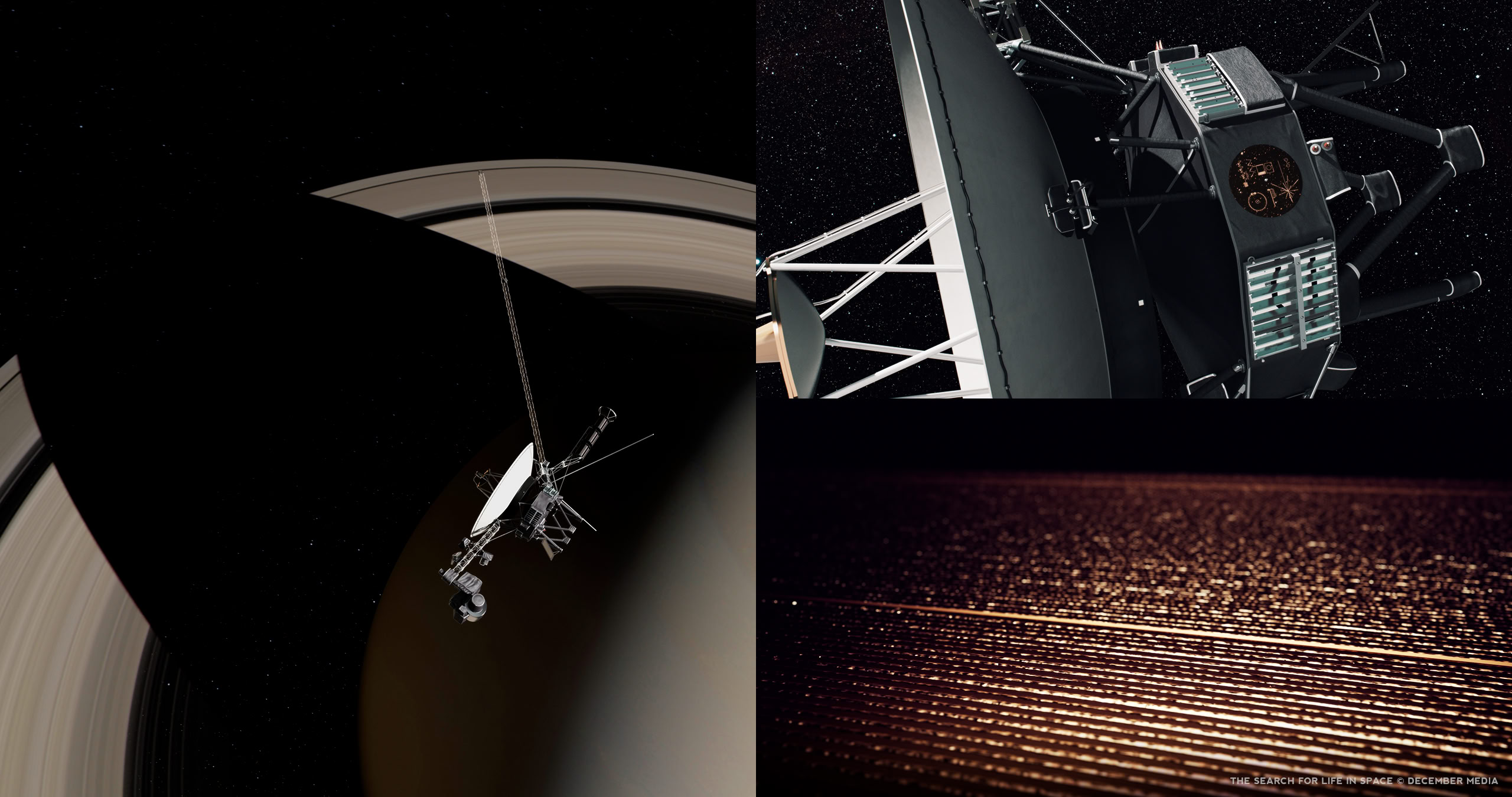

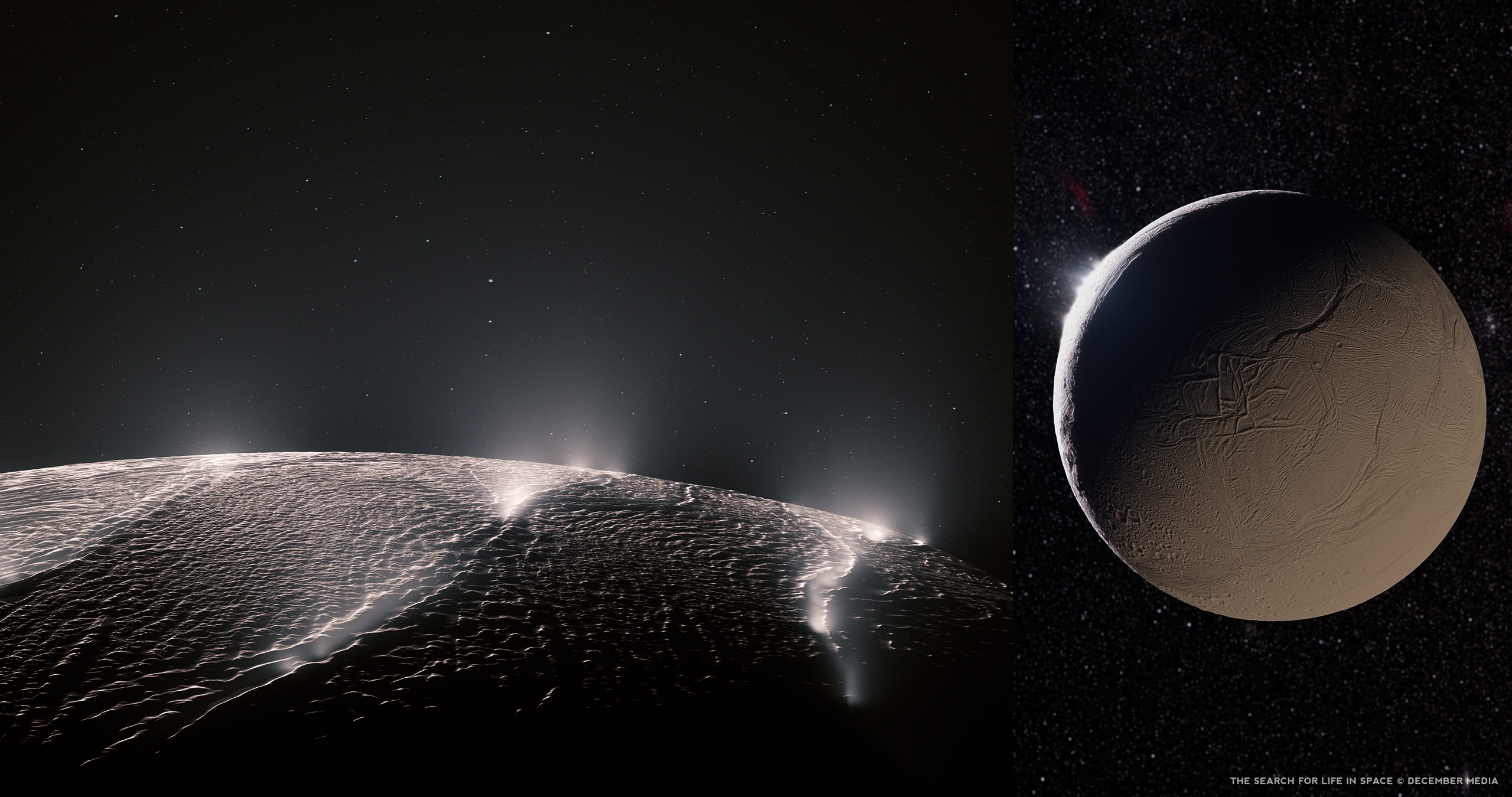

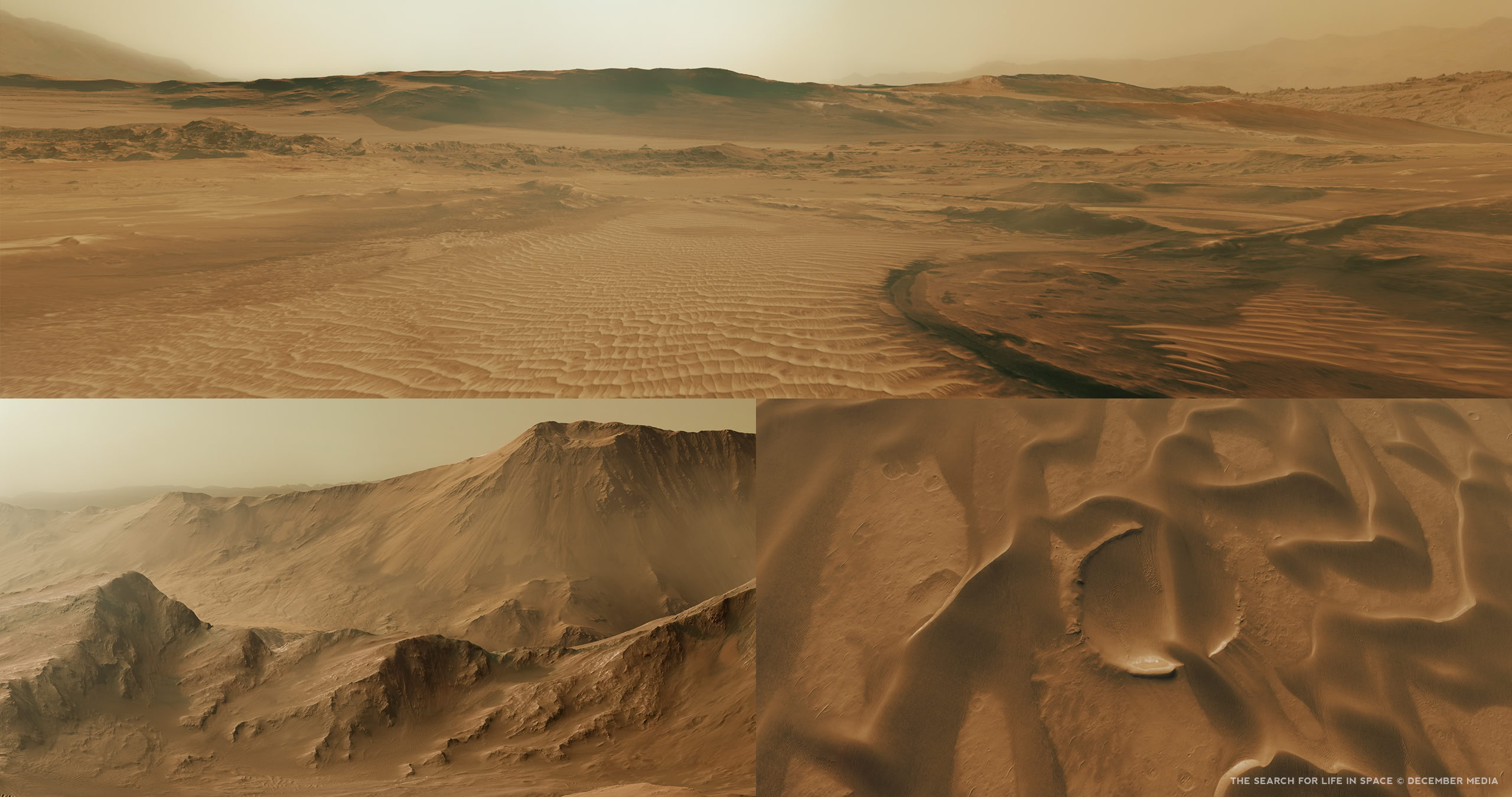

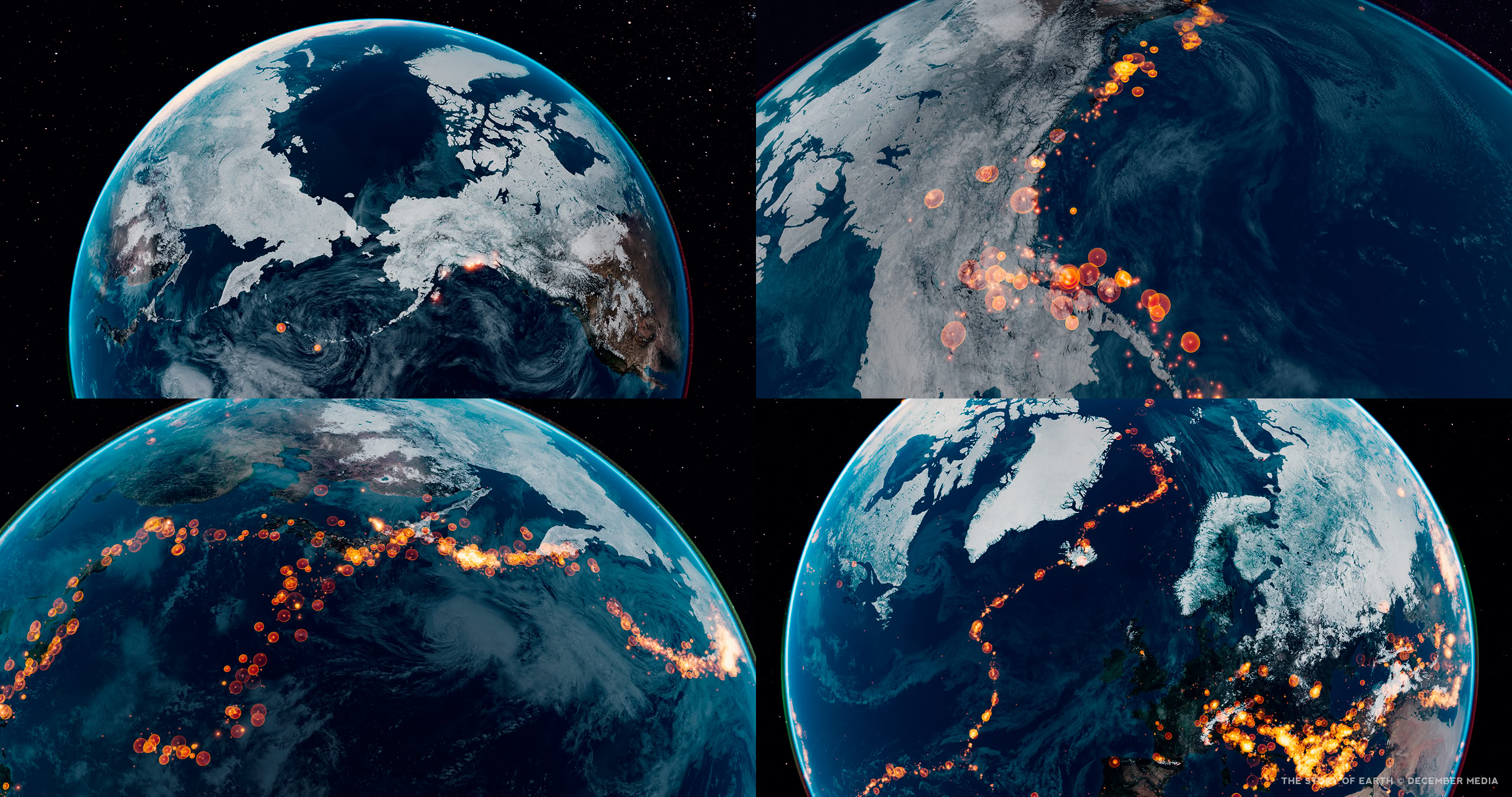

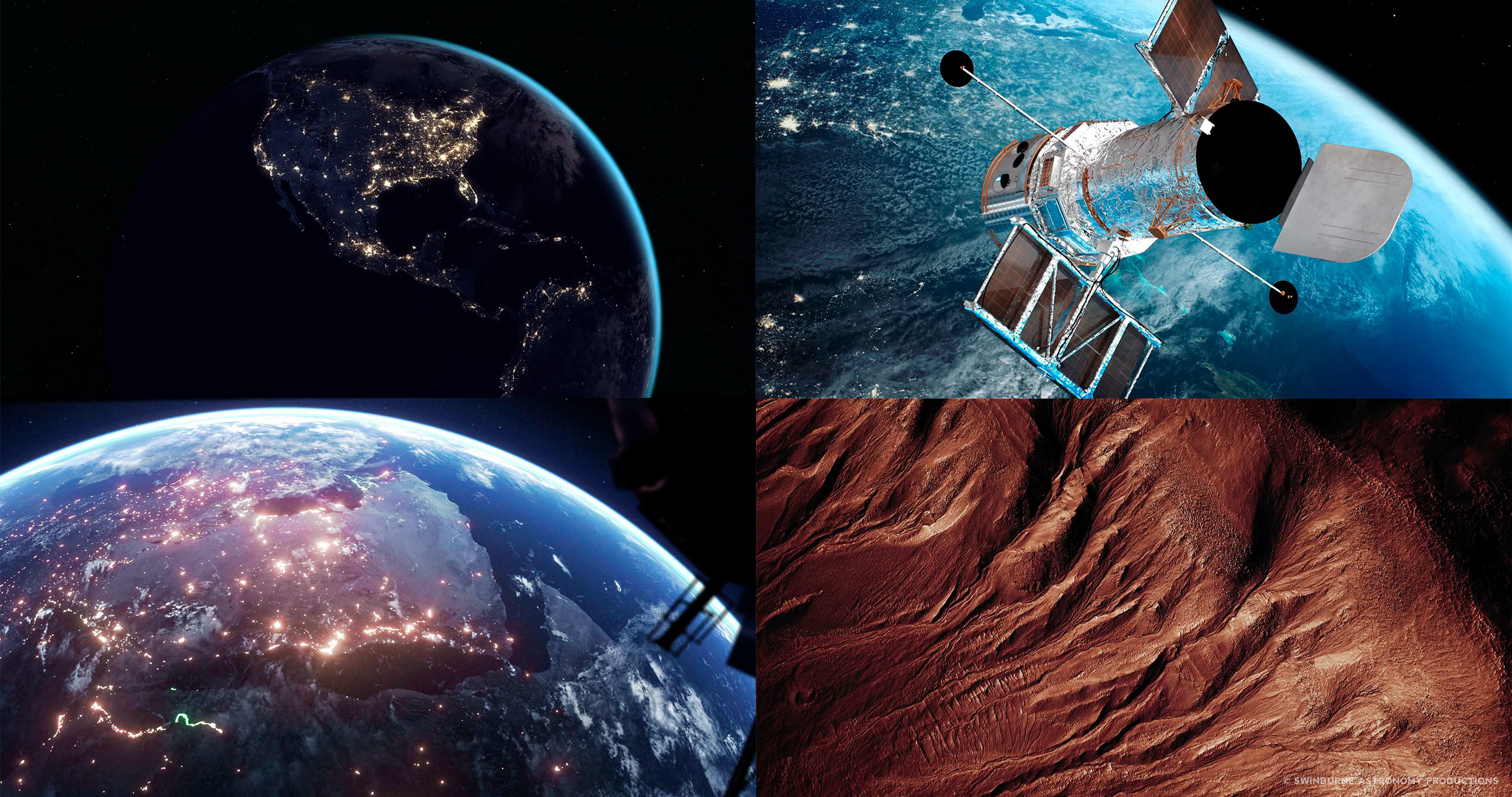

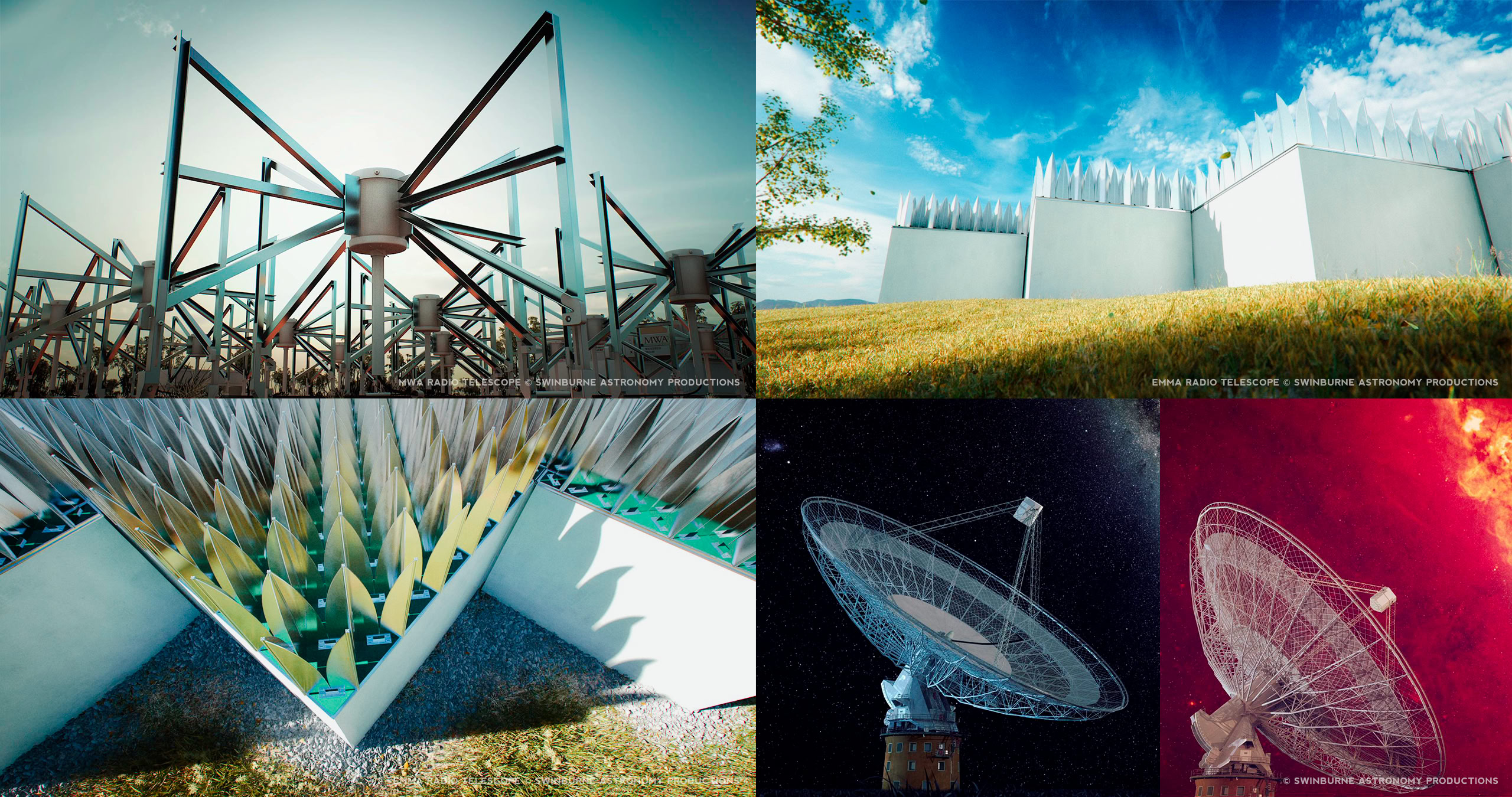

The footage in this reel has been selected from a few of my most recent projects. These sequences were created for a range of formats, including 8K IMAX 15/70mm, 3D digital laser, dome and television.

I have included a text overlay to identify any aspects of these shots created in collaboration with others. Unless otherwise noted, all work involved in creating these sequences is my own.

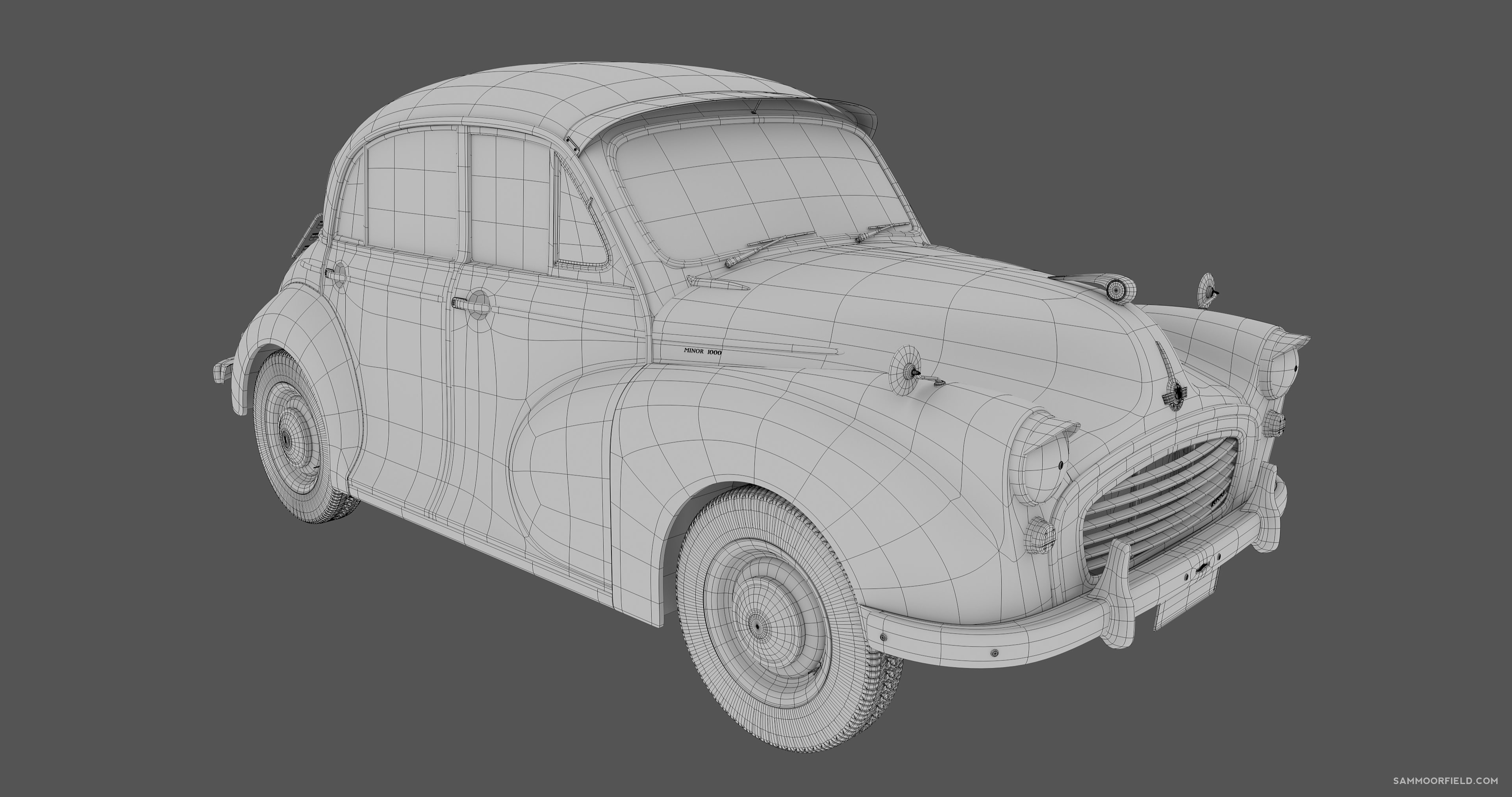

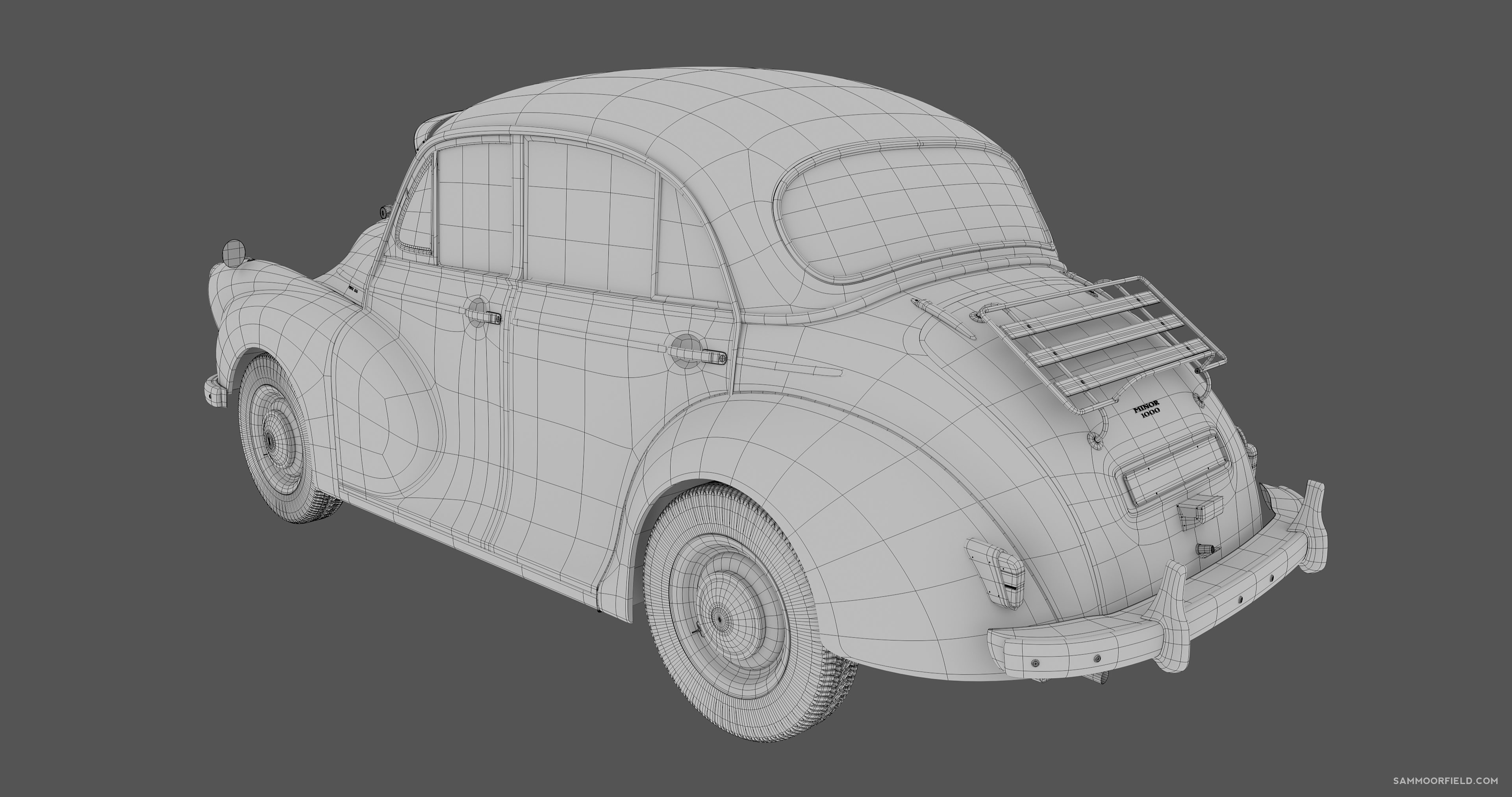

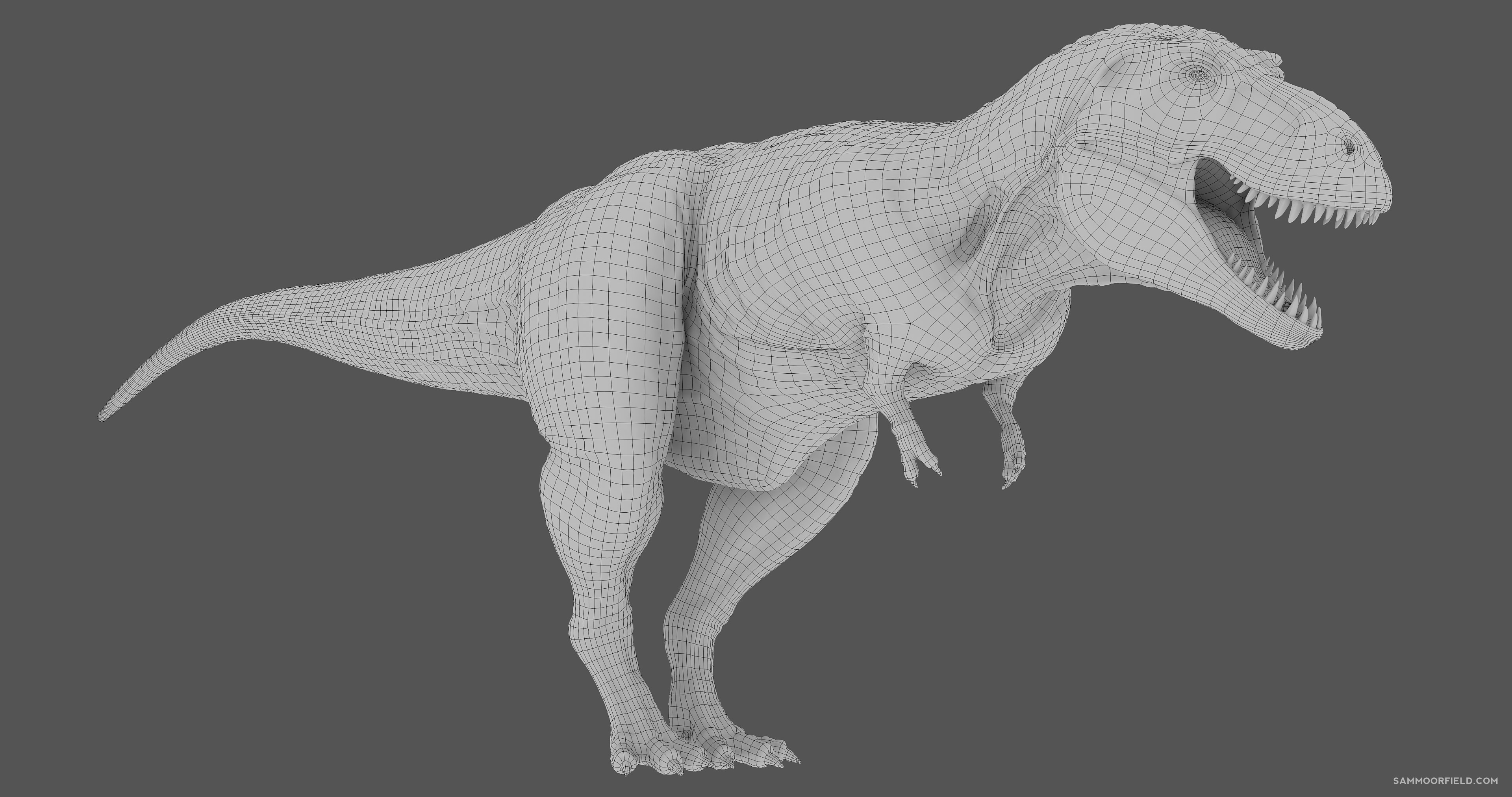

I have been developing CGI and VFX since 2005. This has involved leading teams of artists through the creation of sequences for a range of formats, from 8K IMAX 15/70 film and DCI digital cinema to dome and television. In addition to 3D and 2D animation, I have been responsible for establishing project pipelines, scheduling and documentation.